Samsung Avatar: One World

Connecting every voice — exploring novel XR use cases for Samsung Avatars, culminating in an accessibility-first sign language communication tool.

Role

XR Concept Designer

Team

4 People (Abhishek, Saleha, Shubhanshu, Sumesh)

Timeline

6 Weeks

Tools

UE5, Figma, Blender, After Effects

About PRISM & Brief

Students and professors form teams to work on smaller projects — "work-lets" — under guidance from mentors at Samsung R&D Institute, Bengaluru (SRI-B). The initiative sharpens skills and opens doors to better placements.

Samsung Brief

Avatars are key for self-expression and virtual communication — they extend a user's identity. XR adds depth, 3D interaction, gestures, and multi-modality. This project explores novel use cases for Samsung Avatars in XR, integrating them with Samsung's app ecosystem beyond mobile and into the immersive XR space.

Duration: 6 weeks

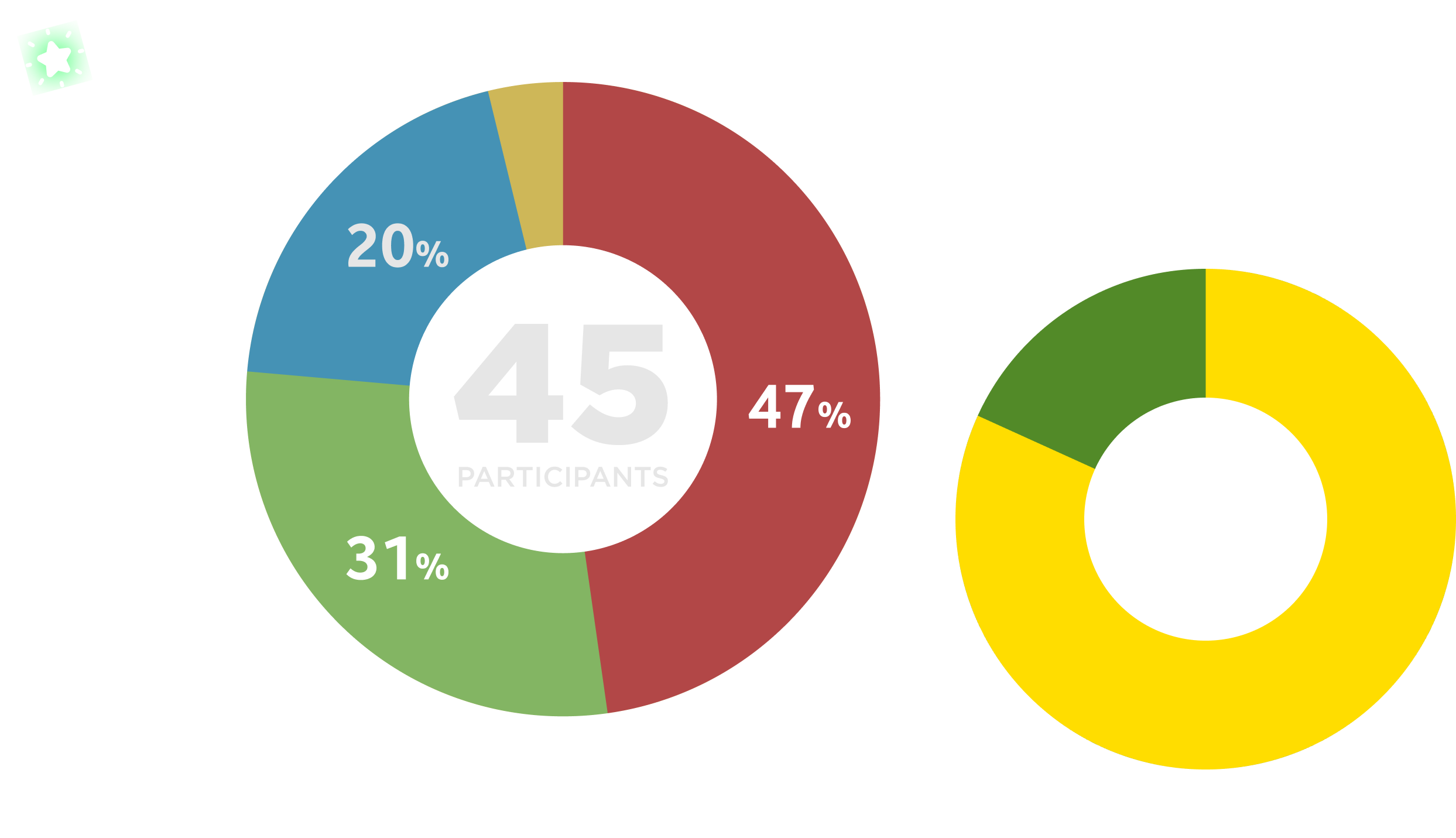

Research: 45 participants surveyed

Ideation: 5 concepts generated

Delivery: 1 final solution

Concept Video

My Responsibilities

As the lead XR Concept Designer for this Samsung PRISM project, I steered the design strategy from initial desk research to the final high-fidelity prototype video.

Primary Research

Conducted surveys with 45+ participants and synthesized interview data into actionable insights.

Concept Design

Ideated 5+ unique use cases for Samsung Avatars, eventually pivoting to a high-impact accessibility solution.

UI/UX Strategy

Designed the VR-first interaction dictionary and multi-phase user workflows.

Prototyping & Motion

Directed and edited the final high-fidelity prototype video demonstrating the Avatar Translator in action.

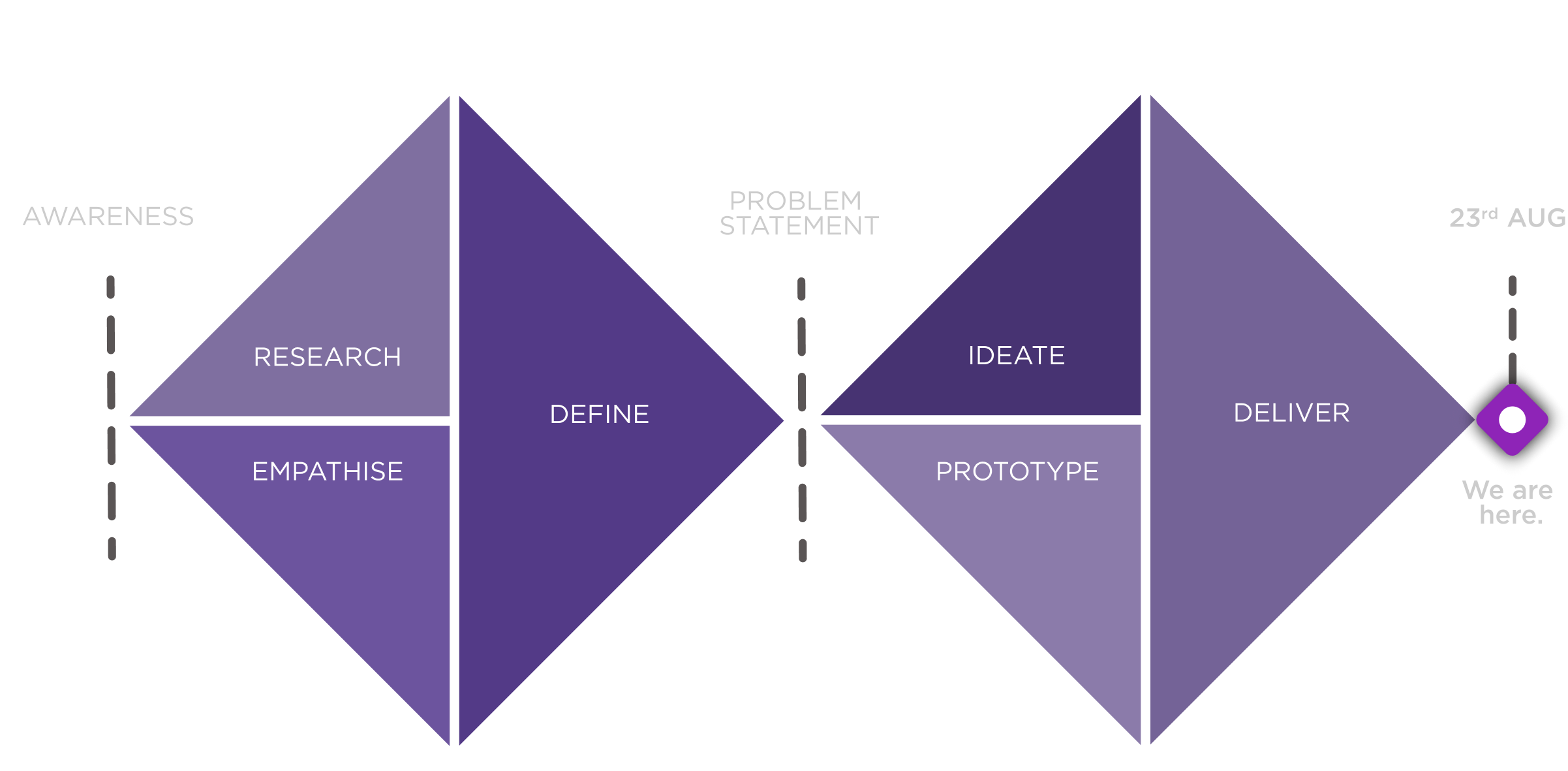

Design Methodology

A Double Diamond–led sprint — 4 weeks of research and definition, converging into ideation and delivery by the 23rd August deadline.

Timeline

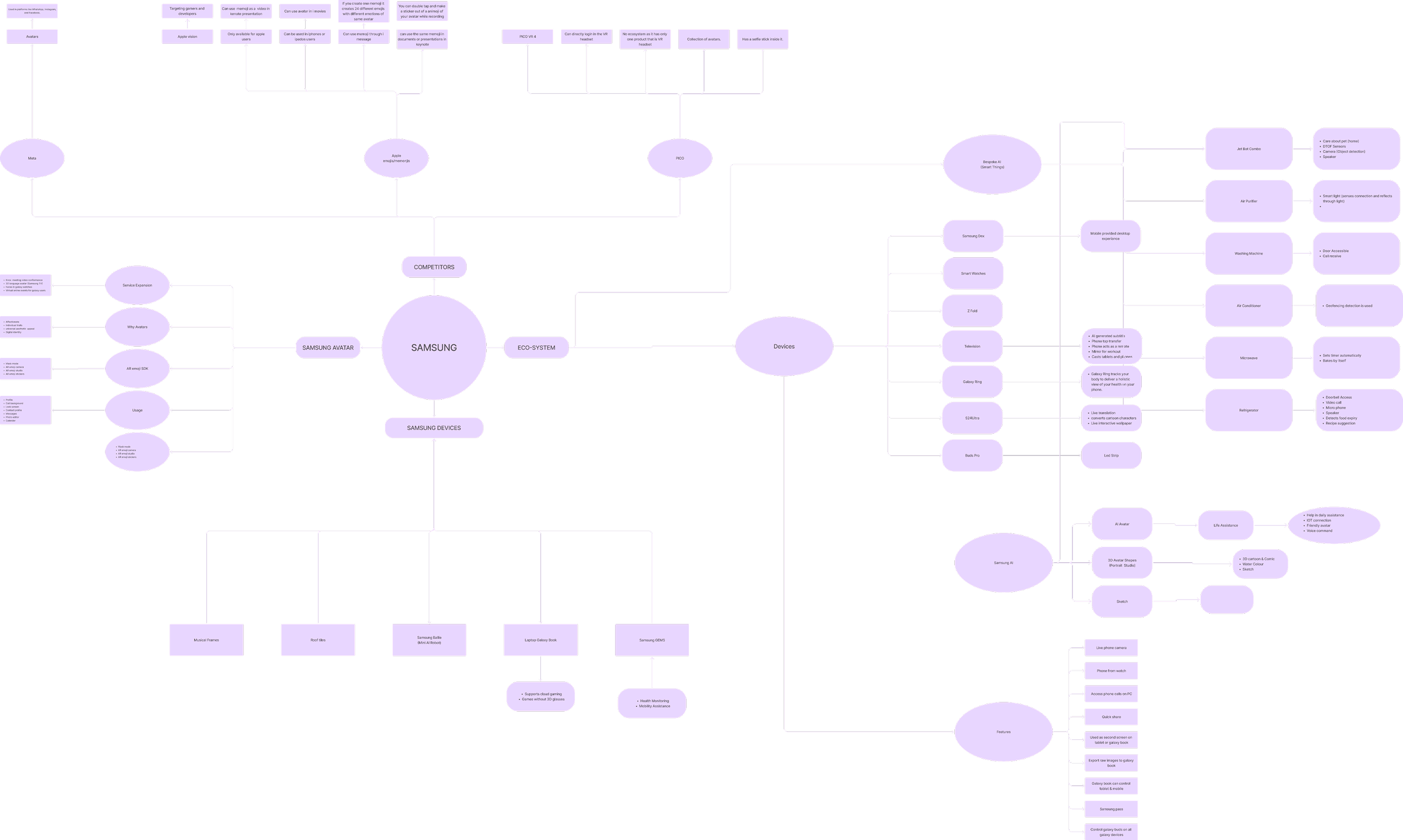

Information Gathered from Online Sources

XR Devices — Avatar Landscape

Three major XR platforms benchmarked across tracking, ecosystem compatibility, customisation, and realism.

Identifying Stakeholders

Defining the stakeholders.

Primary

Secondary

Inclusive Gen-Z and Millennials

Interview Questions

Which gadgets do you use, and from which brands?

Which devices do you currently own or use?

Do you know about any avatar/memoji feature currently available on your device?

If yes, for what purposes do you use the avatar/memoji feature?

How would you rate your interest in using avatars for the following purposes?

What would be your interest in using avatars for the following purposes?

Where could VR be integrated with your device ecosystem to make your life easier?

Is there any specific feature you want on your avatar, or any task or interaction you want it to perform?

Research Insights

Gen-Z users own Samsung- and Apple-branded gadgets

Most users' gadgets are Apple-branded

Millennials own different devices from different brands

Gen-Z users own different devices from different brands

Avatar usage found in social media apps (Snapchat) & gaming

Avatar usage found in social media; current Avatars look cartoonish

Future usage on social media interaction, virtual meeting & shopping

A single virtual avatar that expresses body language & voice

Virtual assistance to handle everyday tasks at home & smart home management

Virtual assistance with food-ordering commands and map guidance integration

Problem Statement

Very few Samsung device users are aware of the avatar features available in AR Zone, leading to underutilisation of avatars within the Samsung ecosystem, including the XR domain. We need to explore opportunities for integrating avatars more effectively and increasing user engagement by understanding the needs and preferences of Gen Z.

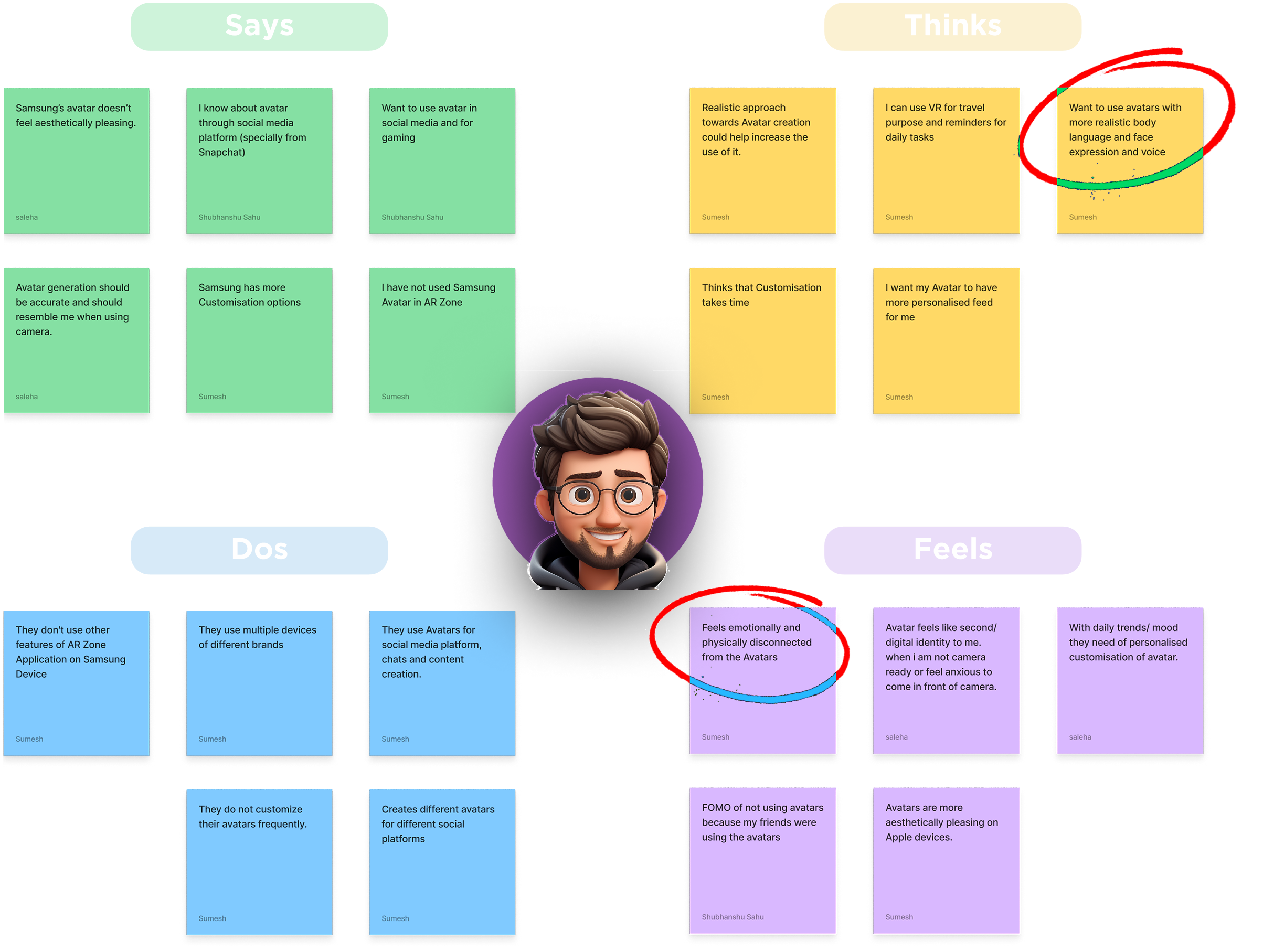

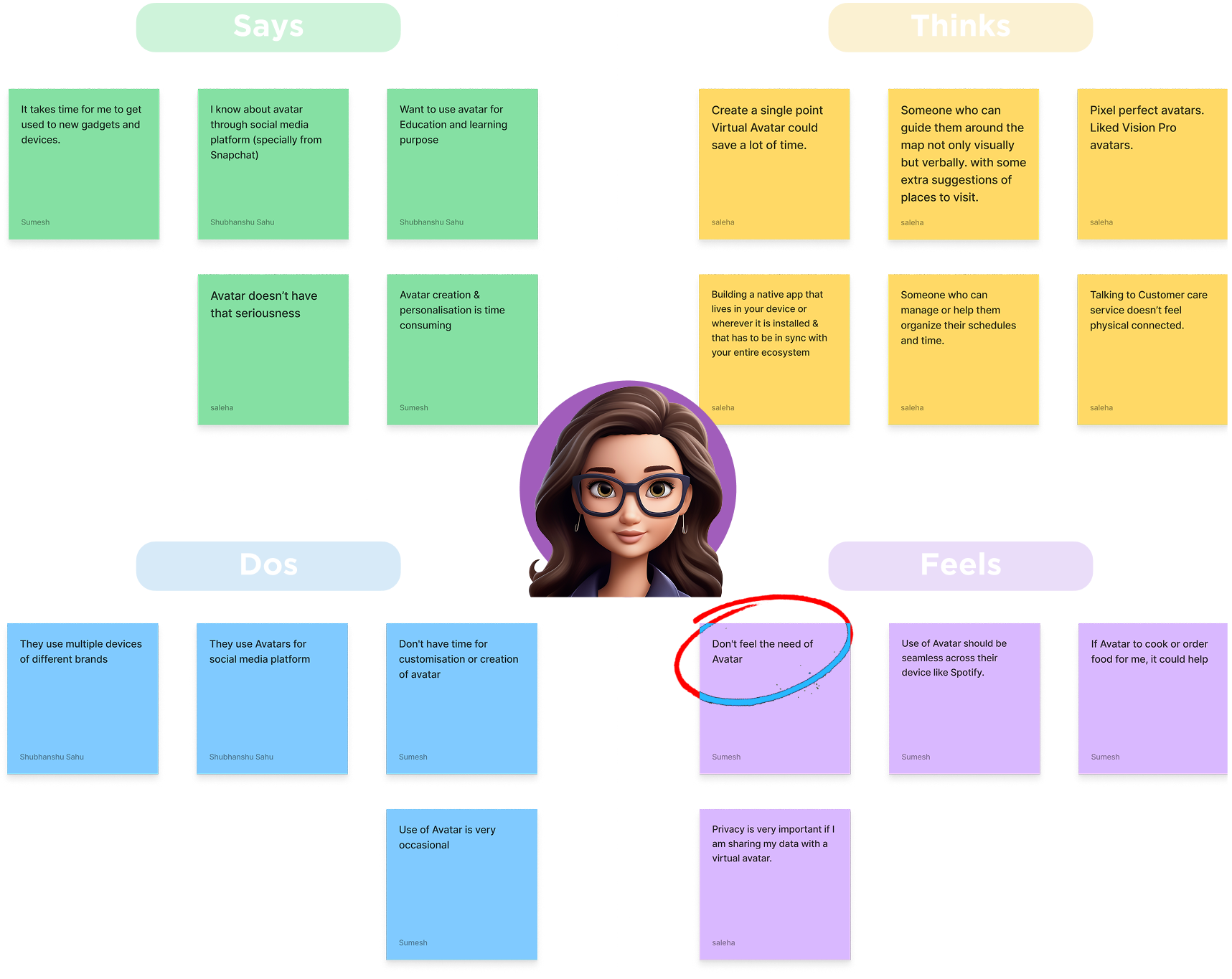

Empathy Mapping

Mapping out the desires, frustrations, and device behaviors of our two primary demographic segments to find overlapping pain points.

Gen-Z Students

Millennial Professionals

Concepts

Round 1 — Initial Ideas

Samsung Virtual Store Assisted by an Avatar

Avatar-assisted virtual Samsung retail experience.

3D Custom Asset & Virtual Pet

Personalised avatar companion that lives across devices.

3D Avatar Comic stories / AI Generator

Avatar comic stories auto-generated from photos in your Samsung gallery.

Round 2 — Rethink: Avatar as Hero

Live stream your Avatar in VR Games

3D Avatar Comic stories / AI Generated video clips

Talking Avatar for your Voice mail

Product Avatars of Samsung Ecosystem

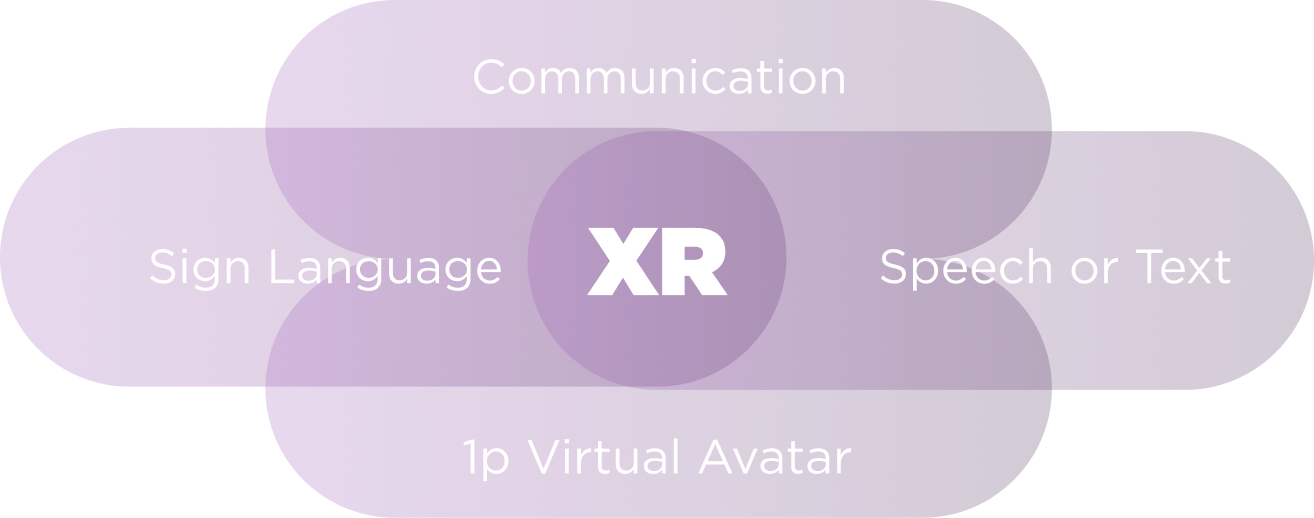

Sign Language for Deaf & Mute Individuals

How do deaf and mute (D&M) individuals communicate?

Can the VR domain offer a viable solution to their communication problems?

An avatar feature could provide the most value to these users.

Why did we choose Accessibility?

Samsung Philosophy

Samsung designs its products with accessibility in mind and believes that everyone should have equal access to technology, regardless of their abilities.

Strategic Aim

Our aim is to bridge the communication gap between deaf & mute individuals and hearing people with the help of an avatar-based communication tool.

Goal of our Research

Our primary goal was to deeply understand the communication needs and lived experiences of deaf & mute individuals.

Developing solutions that simplify and enhance their everyday communication experiences through XR.

Exploring the nuances of sign language, lip reading, and writing across diverse regions and cultures.

How might we utilize Samsung's existing Avatar ecosystem to create a real-time sign language translation tool that empowers deaf and mute individuals to communicate more naturally in immersive virtual spaces?

Research Insights.

Communication through sign language, lip reading & writing.

Ways of learning sign language vary by region, state, and nation.

Lip reading does not work with every person.

Eager to connect with relatives and guests in-person or via Video Calls.

Enjoys watching movies and engaging with new people in their environment.

Emotions conveyed via touch, facial expressions, and digital cues.

Visual and emotional representation is critical for effective communication.

Highly interested in exploring and using the latest gadget ecosystems.

Proficient in digital creation, gaming, and smartphone multitasking.

Major Pain points.

Communication Barriers:

- Difficulty in understanding sign language from other regions.

- Challenges in lip reading, especially with new individuals.

Emotional Expression:

- Limited ability to convey emotions through traditional communication methods.

- Reliance on exaggerated facial expressions, touch, and crying to express feelings.

Lack of Standardization:

- Variations in sign language learning methods from person to person, state to state, and nation to nation.

Opportunities.

Avatar Integration

Recognize and translate regional sign languages.

Convey and interpret emotions effectively.

Communication Tools

Sign language-to-speech and speech-to-sign language functionalities.

Social Connectivity

Video calls with avatars that bridge communication gaps between deaf, mute, and hearing individuals.

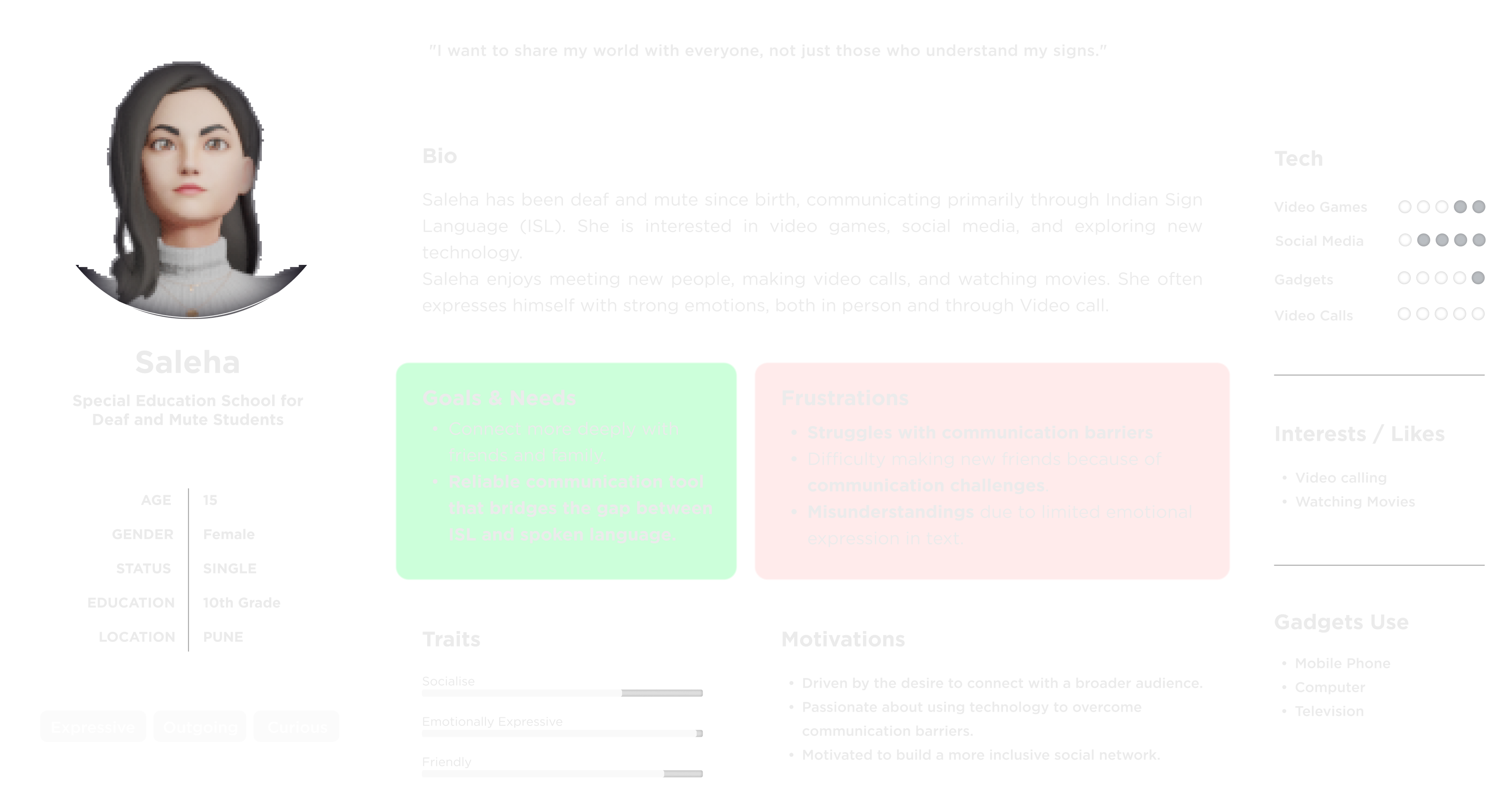

Deaf & Mute Student

After pivoting to accessibility, we built a persona grounded in real research on deaf and mute communication needs — not assumptions.

Revised Problem Statement.

Deaf and mute individuals face communication challenges with hearing people due to varying sign languages. We aim to bridge this gap by developing inclusive avatar features for Samsung XR devices, enabling efficient real-time translation and enhanced emotional expression for a truly connected interaction.

Other Interactions

Custom Sign Dictionaries

Users can add custom sign gestures to their dictionaries, which the avatar can then use to perform specific words or phrases relevant to their needs.
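As a rough illustration of what a custom sign dictionary could look like in data terms, here is a minimal Python sketch. The `GestureEntry` and `GestureDictionary` names, the fields, and the frame format are all hypothetical and were not part of the concept deliverable; the project itself ended at a concept video.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GestureEntry:
    """One user-recorded sign and the phrase it stands for (hypothetical schema)."""
    phrase: str
    language: str  # e.g. "ASL"
    # Recorded poses: one tuple of joint coordinates per captured frame.
    frames: list[tuple[float, ...]] = field(default_factory=list)


class GestureDictionary:
    """In-memory store keyed by the normalised (trimmed, lower-cased) phrase."""

    def __init__(self) -> None:
        self._entries: dict[str, GestureEntry] = {}

    @staticmethod
    def _key(phrase: str) -> str:
        return phrase.strip().lower()

    def save(self, entry: GestureEntry) -> None:
        # Saving a phrase that already exists overwrites the earlier recording.
        self._entries[self._key(entry.phrase)] = entry

    def lookup(self, phrase: str) -> GestureEntry | None:
        return self._entries.get(self._key(phrase))

    def phrases(self) -> list[str]:
        return sorted(self._entries)
```

Keying on the normalised phrase means a spoken or typed "I am sorry" resolves to the same recording regardless of casing or stray whitespace.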

Multi-Language Translation

Seamlessly translate between different regional and international languages. Users can hear other individuals' speech translated into their own regional language through their personal avatar.

Technical Considerations

Precise Upper Body Tracking

Speed Adjustment of Avatar

Repetition feature
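The speed adjustment and repetition considerations can be pictured as simple operations on an avatar animation's keyframes. The sketch below is an assumption for illustration only: it models a sign as a list of `(time_in_seconds, pose)` pairs, and the `retime` and `repeat` helpers are hypothetical names, not part of any Samsung or UE5 API.

```python
def retime(keyframes, speed):
    """Scale keyframe timestamps so playback runs at `speed` times normal.

    A speed of 0.5 plays the sign at half speed (timestamps double).
    """
    if speed <= 0:
        raise ValueError("speed must be positive")
    return [(t / speed, pose) for t, pose in keyframes]


def repeat(keyframes, times, gap=0.5):
    """Play the same sign `times` times, pausing `gap` seconds between plays."""
    if not keyframes or times < 1:
        return []
    duration = keyframes[-1][0]  # timestamp of the last keyframe
    out = []
    for i in range(times):
        offset = i * (duration + gap)
        out.extend((t + offset, pose) for t, pose in keyframes)
    return out
```

For example, a viewer who missed a sign could request `repeat(retime(sign, 0.5), 2)` to see it twice at half speed.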

Avatar translator feature for Deaf & Mute individuals

Overview: This use case aims to facilitate real-time communication between deaf and mute individuals and hearing participants within a VR environment.

Entry Point: A deaf and mute user enters a VR environment (Google Meet) and initiates a session.

Novel Interactions:

- Avatar-Based Sign Language Translation: Deaf users will have avatars capable of translating sign language gestures to speech in real time for the hearing participants.

- AI-Powered Sign Language Recognition : Smart & advanced AI will accurately recognise and help interpret sign language gestures.
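To make the recognition step above concrete, here is a deliberately simple Python stand-in. A real system would most likely use a trained model; this sketch instead matches a performed gesture against recorded templates by mean per-frame distance. The `recognise` function, the frame format, and the `threshold` value are all assumptions for illustration and do not describe the project's actual approach.

```python
import math


def gesture_distance(performed, template):
    """Mean per-frame Euclidean distance after sampling both to equal length."""
    n = min(len(performed), len(template))
    if n == 0:
        return math.inf

    def pick(seq, i):
        # Evenly sample n frames from a sequence that may be longer than n.
        return seq[i * len(seq) // n]

    total = sum(math.dist(pick(performed, i), pick(template, i)) for i in range(n))
    return total / n


def recognise(performed, templates, threshold=0.25):
    """Return the phrase of the closest template, or None if nothing is close."""
    best_phrase, best = None, math.inf
    for phrase, template in templates.items():
        d = gesture_distance(performed, template)
        if d < best:
            best_phrase, best = phrase, d
    return best_phrase if best <= threshold else None
```

Returning `None` when no template is close enough lets the avatar stay silent rather than speak a wrong phrase, which matters when the output is someone's voice in a meeting.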

Exit Point: Users end the communication session and exit the VR environment.

User Flow

Phase 01: Setup & Recording

- Saleha has a meeting to attend.
- Puts on the Samsung VR headset and turns it on.
- Goes to settings.
- Opens the "Gesture Dictionary".
- Selects "Add New Gesture".
- Selects "Record New Gesture".
- The device asks permission to use the camera.
- Selects "Enable Device Camera".
- Presses X to start the recording.
- Presses Y to finish the recording.
- Types a phrase for the gesture in the Text panel.
- Selects "Save Gestures".

Phase 02: Calibration & Enabling

- Tests the added gestures.
- Clicks the test button for one of the added gestures in the dictionary ("I am sorry").
- Performs the gestures and checks them.
- The gestures can be edited, or saved if no changes are needed.
- Then, in settings, opens the "Accessibility Section".
- Selects "Hearing enhancement".
- "Preferred Language" turns on the feature.
- A pop-up appears indicating "Avatar Translator Feature is Off".
- Selects "Enable Avatar Translator".
- The feature is enabled.
- Chooses the preferred language, ASL (American Sign Language).
- Closes the settings tab.

Phase 03: The Meeting

- Clicks on the "Google Meet Application".
- Enables the accessibility features from the Samsung XR headset settings in the Navigation Panel.
- Clicks on "New Meeting" to generate a meeting link.
- Shares the link with the participant to join the meeting.
- The participant joins, and the meeting begins.
- Saleha makes gestures that are converted into speech by the avatar.
- Abhishek speaks, and his speech is converted into sign language.
- At the end of the meeting, Saleha selects the Heart gesture from the reaction options.
- Heart emojis appear around the avatar, conveying the user's emotions.
- The meeting ends.

Final Prototype.

Future Scope

Emotional Intelligence

Recognise and convey emotions in both spoken and sign language, fostering empathetic communication.

Context-Aware Translations

Understand conversation contexts (e.g., formal, casual) for more accurate and appropriate sign language translations.

AR Integration

Enable users to see the avatar's sign language translations and real-time visual support in educational settings.

Learnings

Incorporating Feedback

Continuous user feedback

Problem Solving

Addressing challenges related to accessibility

Future Forward Thinking

Exploring technology innovation

Project Management

Coordinating and managing timelines

Final thought

"The most powerful thing a Samsung Avatar can do isn't look like you. It's speak for you — especially when the world hasn't given everyone an equal voice."

— Shubhanshu Sahu
